Basic Photogrammetry Workflow tutorial

Photogrammetry = creating 3D models in software from photographs

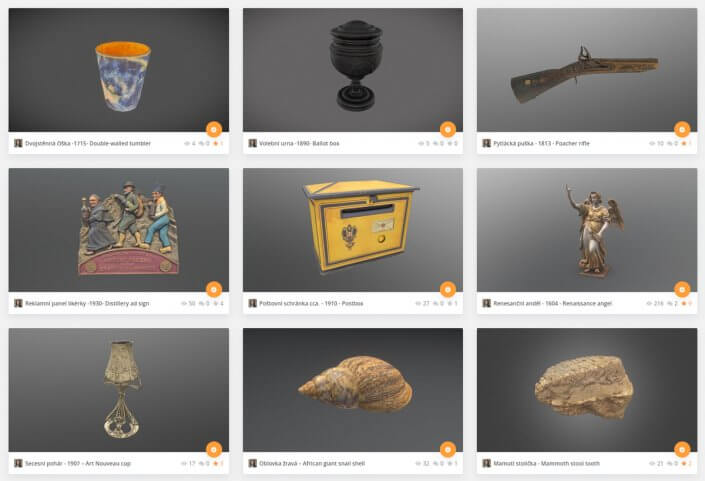

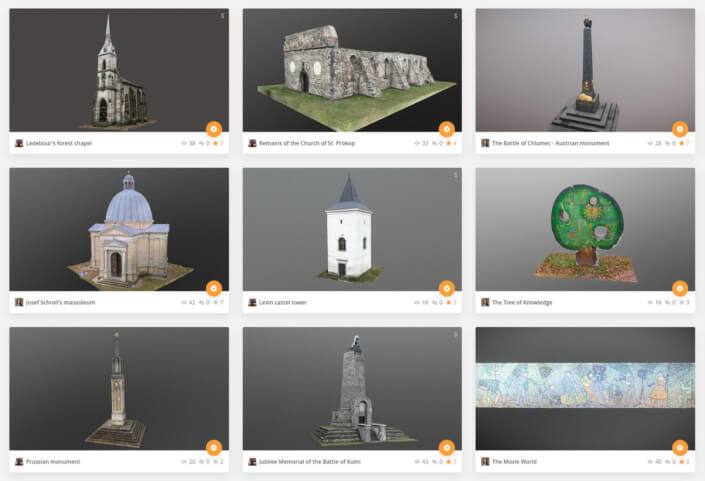

0) choosing subjects

anything unique and easily accessible in your surroundings – natural features, location specifics, vintage items

avoid reflective or translucent surfaces

easy to start with: cut tree stumps, sculptures, larger rocks

1) photo capture

Optimal: cloudy weather, a soft multi-light setup with no shadows, or a quality ringflash with CPL

Optimal DSLR settings: shutter 1/125 or shorter, ISO 200 or lower, F/11 or higher (but not the highest f-number your lens offers, where diffraction softens the image)

The goal: no out-of-focus areas from shallow depth of field and no motion blur – a sharp subject with no shadows, reflections, light falloff, flares or noise, photographed from all available angles
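To get a feel for why F/11 helps keep the whole subject in focus, here is a rough sketch using the standard thin-lens depth-of-field approximation. The 0.03 mm circle of confusion is a common full-frame convention and an assumption here, as are the 50 mm focal length and 2 m subject distance.

```python
import math


def depth_of_field_mm(focal_mm, f_number, subject_mm, coc_mm=0.03):
    """Return (near, far) limits of acceptable sharpness in millimetres.

    coc_mm is the circle of confusion; 0.03 mm is an assumed full-frame value.
    """
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2 * focal_mm)
    if subject_mm >= hyperfocal:
        far = math.inf  # everything behind the subject is acceptably sharp
    else:
        far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return near, far


# Illustrative comparison: 50 mm lens focused at 2 m, F/4 versus F/11.
for n in (4, 11):
    near, far = depth_of_field_mm(50, n, 2000)
    print(f"F/{n}: sharp from {near / 1000:.2f} m to {far / 1000:.2f} m")
```

The sharp zone at F/11 is several times deeper than at F/4, which is what keeps both the front and the back of the subject usable for alignment.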

Each point of the subject should be visible in at least 3 photos. The ideal number of shots could be anywhere between 40 (small items) and thousands (architecture)

The basic way is to move around the subject in a circle and take a pic with each step. Then repeat from different angles (go lower and higher).
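The circle-by-circle capture can be sketched as a list of camera positions. This is only a planning aid under assumed numbers: the 2 m radius, the three heights and the 15° step are illustrative values, not recommendations from this tutorial.

```python
import math


def ring_positions(radius_m, height_m, step_deg):
    """Camera positions (x, y, z) evenly spaced on one circle around the subject."""
    shots = max(3, round(360 / step_deg))
    positions = []
    for i in range(shots):
        angle = 2 * math.pi * i / shots
        positions.append((radius_m * math.cos(angle), radius_m * math.sin(angle), height_m))
    return positions


def capture_plan(radius_m, heights_m, step_deg):
    """One ring per camera height: walk a circle, then repeat lower and higher."""
    plan = []
    for height in heights_m:
        plan.extend(ring_positions(radius_m, height, step_deg))
    return plan


# Hypothetical subject: three rings at 0.5 m, 1.5 m and 2.5 m, one shot every 15 degrees.
plan = capture_plan(2.0, [0.5, 1.5, 2.5], 15)
print(len(plan))  # 72
```

A smaller step gives more overlap between neighbouring photos, which makes it easier for each point to appear in at least 3 shots.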

mid-tier+ smartphones can be used (but lock WB and exposure)

drone can be used, but makes things harder to learn

2) preparing photos

after downloading to your pc/laptop, delete blurry shots
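Blurry shots can be pre-screened before you check them by eye. One common heuristic (not something this tutorial's tools require) is the variance of the Laplacian: sharp images have strong edges, so the value is high. A minimal pure-Python sketch, assuming the photo is already loaded as a grid of grayscale values (loading, for example with Pillow, is left out) and that the threshold is a hypothetical number you tune per dataset:

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over the interior pixels.

    gray is a list of rows, each row a list of grayscale values (0-255).
    """
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            responses.append(
                gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
    return pvariance(responses)


def is_probably_blurry(gray, threshold):
    """threshold is hypothetical: calibrate it on known sharp and blurry shots."""
    return laplacian_variance(gray) < threshold


# Toy data: a hard checkerboard (sharp edges) versus a smooth ramp (no edges).
sharp = [[255 if (x + y) % 2 else 0 for x in range(8)] for y in range(8)]
smooth = [[x * 10 for x in range(8)] for _ in range(8)]
print(laplacian_variance(sharp) > laplacian_variance(smooth))  # True
```

Treat the score as a way to sort shots for review, not as an automatic delete rule: a sharp subject in front of a blurred background can still score low.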

process raws to full size jpgs in Lightroom / Darktable (free)

equalize white balance, further reduce contrast, shadows and highlights, lower digital noise as needed, sharpen a bit, apply lens corrections

feel free to check and test-process some of my photo datasets https://skfb.ly/opWAG

3) model creation

import photos, align, generate mesh, optimize (reduce the size as needed), unwrap & texture, export .FBX

The workflow is quite similar in each program. The UI can seem overwhelming at first, but there’s always a Wizard guide mode to help with the first steps.

RealityCapture is PPI (pay per input: you pay by the megapixels of your input photos, usually ~$4 for a model from 200 full-frame photos)

Agisoft Standard is $180 (unlimited license)

PhotoCatch is PPI after 10 exports, Mac only

3DF Zephyr is free for <50 shots per model (enough for simpler subjects)

freeware alternatives not worth it in 2022 (sadly)
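For budgeting a PPI export, a back-of-the-envelope estimate can be sketched like this. It assumes the price scales linearly with total input megapixels, and that the "~$4 for 200 full-frame photos" figure refers to 24 MP photos; both are assumptions, so check the vendor's current pricing before relying on the numbers.

```python
def usd_per_megapixel_from_reference(ref_cost_usd=4.0, ref_photos=200, ref_megapixels=24.0):
    """Derive a rough rate from the ~$4 / 200 photos figure (24 MP is assumed)."""
    return ref_cost_usd / (ref_photos * ref_megapixels)


def estimate_ppi_cost(photo_count, megapixels_per_photo, usd_per_megapixel):
    """Linear estimate: total input megapixels times the assumed rate."""
    return photo_count * megapixels_per_photo * usd_per_megapixel


rate = usd_per_megapixel_from_reference()
# Hypothetical dataset: 350 photos from a 36 MP camera.
print(f"~${estimate_ppi_cost(350, 36.0, rate):.2f}")
```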

A CUDA-capable Nvidia GPU is needed (Agisoft can use OpenCL on AMD cards, but it is very slow; PhotoCatch can use M1 Mac chips); a GTX 1060 is OK-ish

16GB RAM minimum

4) cleanup

ZBrush or Blender (free) – 3D “photoshop” for retouching distorted edges (smoothing) and texture errors (clone stamp)

5) publish

to Sketchfab (<50mb preferred) or Nira (unlimited)

or deliver to client

or sell on the Sketchfab Store, TurboSquid…

For 3D scans, the preferred Sketchfab editor setting is “Shadeless”. To recover material properties, such as light absorption or metalness, you need to do a PBR setup. Unreal Engine 5 offers PBR presets and can automate the process. Other recommended settings are adding Sharpness, some SSAO, Bloom and Grain, and adjusting Exposure as needed.

…text revision v0.92 (Jan 22 2022)

Compared to the 360° virtual tour style, photogrammetry is more about focusing on one point from hundreds of angles than focusing on everything around you from one point.

Here’s an overview of my camera positions for the wreck scan: https://drive.google.com/drive/folders/16b5GKL9_L44WJDT_h53EYLhWYLittRAh

and also a Lightroom preset. It’s very useful to remove as much of the shadows and highlights from the source photos as possible.

Raw is a must.

The best value option for a camera would be a secondhand Nikon D810 (the Z7 II is great, the D600 is the ultra-budget pick) with a Sigma 24-105mm f/4 DG OS HSM Art lens. Lenses are not super important, as every lens is very sharp in the F/8 – F/16 range.

Laptop tethering for studio / table top setups.

When working for a museum or art gallery, professional model presentation can be a challenge.

Sketchfab is the standard for this, but you want your models well optimized. Museums can obtain a Premium plan for free, which removes the SF logo and allows for better customization.

With that you can also use SF for in-gallery presentation on a TV screen (the data only loads once, then it works offline). A full offline premium 3D player from SF is coming in the near future.

Nice fullscreen autoload can look like this. You can then set up Windows kiosk mode and auto-enter fullscreen on startup.
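One possible way to get the fullscreen autoload on a gallery PC is to open the Sketchfab embed URL in a browser started with its own kiosk flag. This is a sketch under assumptions: `chrome.exe` on the PATH and the model UID are placeholders, and it uses the browser's `--kiosk` switch rather than the Windows kiosk feature itself.

```python
import subprocess


def sketchfab_embed_url(model_uid):
    """Embed URL with autostart=1 so the model loads without a click."""
    return f"https://sketchfab.com/models/{model_uid}/embed?autostart=1"


def kiosk_command(model_uid, browser_exe="chrome.exe"):
    """Command line that opens the model fullscreen; browser_exe is an assumed path."""
    return [browser_exe, "--kiosk", sketchfab_embed_url(model_uid)]


def launch_kiosk(model_uid, browser_exe="chrome.exe"):
    """Start the browser; call this from a script placed in the startup folder."""
    return subprocess.Popen(kiosk_command(model_uid, browser_exe))


# "YOUR_MODEL_UID" is a placeholder, not a real model.
print(" ".join(kiosk_command("YOUR_MODEL_UID")))
```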

For online presentation of many models, choose Nira for >100M poly and >150MB models (they share free invites on their LinkedIn).

Basic workflow overviews

https://www.youtube.com/watch?v=pGyoM3tujlc&t=331s

https://www.youtube.com/watch?v=tw6wNNEbH_M

https://www.youtube.com/watch?v=56_PQbzT2Gg

https://www.youtube.com/watch?v=2kvT93QlFto

Very tech savvy guy to follow later https://www.youtube.com/watch?v=pGyoM3tujlc

Probably the best app to start with is https://www.capturingreality.com – can be used for free, you only pay if you decide to export the model (PPI).

They have in-app wizard to help. It also has direct Sketchfab uploading.

They have good tutorials of their own https://www.youtube.com/channel/UCIfMxiWnFHwmxrm2nNWK2hg/videos

If you are on a MacBook, you could try https://developer.apple.com/augmented-reality/object-capture/

Reviews say it’s good, but not fully released and probably missing many features.

You can also download some of my ready-made photo datasets for learning the process https://skfb.ly/opWAG

Then there’s Blender https://www.blender.org

It’s for post-process retouching (think of it as Photoshop after doing most of the work in Lightroom). Free, but on its way to becoming an industry standard.

For publishing, everybody uses Sketchfab, with many features for free and also some pro-level commercial abilities on higher tiers (big brands use it as well).

For drones, the workflow is the same, you just use it to reach places you couldn’t on foot. It’s good to mix drone pics with DSLR for maximum quality.

You need to take photos with the drone, not videos. Frames extracted from video are much lower quality and often blurred.

Value choices are: Phantom 4/Pro, Air 2/S

A CPL filter is mostly used for highly reflective surfaces or natural foliage.

A tripod is useful wherever you can’t get enough light for ISO 200, F16 and 1/160 (with these settings you can go handheld safely).
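Whether the light is enough can be estimated from the standard exposure value relation EV100 = log2(N²/t) − log2(ISO/100). The sketch below solves it for the shutter time t. The scene values (roughly EV 15 for bright sun, EV 12 for heavy overcast) are commonly quoted ballpark figures used here only as illustrative assumptions; meter your own scene.

```python
def required_shutter_s(scene_ev100, f_number, iso):
    """Shutter time in seconds for a correct exposure at the given scene brightness."""
    return f_number ** 2 / (2 ** scene_ev100 * iso / 100)


def needs_tripod(scene_ev100, f_number=16, iso=200, slowest_handheld_s=1 / 160):
    """True when the required shutter time is longer than the handheld limit."""
    return required_shutter_s(scene_ev100, f_number, iso) > slowest_handheld_s


# Assumed scene brightness values, for illustration only.
for label, ev in (("bright sun", 15), ("heavy overcast", 12)):
    t = required_shutter_s(ev, 16, 200)
    print(f"{label}: 1/{round(1 / t)} s, tripod needed: {needs_tripod(ev)}")
```

Under these assumptions, overcast light at F16 and ISO 200 needs about 1/32 s, which is tripod territory, so on cloudy days either use the tripod or open up towards F/11.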

In some cases, it is possible to use a ringflash. The best is the Godox AR400.

Basic cross-polarized setup for high-quality macro scans / shiny-ish objects on a rotating table

Basically you want a tripod, a black background, a quality ringflash with polarization filters http://www.immersiveshooter.com/2020/05/11/photogrammetry-photography-guide-ring-flash-photography/ and a shutter-synchronized rotation plate. This is a mid-tier option with an excellent investment/quality ratio. With that, you can get a professional ~180-shot scan within 15 minutes. With 3 cameras and 3 lights, you could get down to 5 minutes, but the setup is a bit more demanding. There are also some commercial, fully automated rigs available.
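The ~180 shots in 15 minutes figure translates into a turntable step and a time budget per shot. A small sketch of that arithmetic, assuming the shots are split evenly over 3 camera heights (the number of heights is an assumption, not stated above):

```python
def turntable_plan(total_shots, rings, total_minutes):
    """Return (shots per ring, table step in degrees, seconds available per shot)."""
    shots_per_ring = total_shots // rings
    step_deg = 360 / shots_per_ring
    seconds_per_shot = total_minutes * 60 / total_shots
    return shots_per_ring, step_deg, seconds_per_shot


print(turntable_plan(180, 3, 15))  # (60, 6.0, 5.0)
```

So each shot gets about 5 seconds for rotation, settling and flash recycle, which is why the shutter-synchronized plate matters.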